The best way for beginners to start producing acceptable mixes is not to focus on what they should be doing but to simply avoid what they shouldn't be doing.

If you can make it past the handful of mixing mistakes most newbies make, you're no longer a newbie. And we can get you there in one easy-to-read discussion, called the 'Audio Mixing for Dummies' tutorial.

We'll also point out other resources here on LedgerNote that will give alternate explanations, provide additional tips and tricks, and introduce you to advanced concepts you can employ to push the quality of your mixes even further.

But for now, we're going to point out the 8 most critical audio mixing mistakes that apply to music, movies, live gigs, and anywhere else you're trying to create a balance between instruments and the environment.

We'll have to make a few assumptions:

- You have the studio hardware needed to record

- You have the computer software to record and mix

- You're familiar with digital audio workstation effects like reverb and EQ

- You have headphones at least and preferably listening monitors as well

Even with the above four points, it's okay if you're still new. Even if you're unsure of some things, keep reading and getting exposed. Repeated exposure is how you'll learn and click all the puzzle pieces together. With that being said, let's get started!

Falling prey to the following fundamental mixing mistakes is the best way to have your clients, listeners, and mastering engineers all jumping down your throat. Dodge these problems, implement these other audio mixing tips, and you'll be well on your way to mixing enlightenment.

For the 8 dummy mixing mistakes, we'll point you in the right direction for a full explanation on the solutions, but won't cover them completely here. You don't need perfection.

Applying a quick fix will show you how important it is to dodge these problems and you can study how to take the answers to the next level later on. Our goal is to introduce you to the problems and solutions first.

1. The Main Audio Mixing Mistake: Muddy & Booming Low-End

The biggest problem every amateur mix has is with the bottom range of the frequency spectrum. There's always too much bass, and the low-end instruments blur and bleed into one another.

The bass ends up getting louder and louder because the mixer wants to hear clarity but can't achieve it in any other way.

You can summarize this issue with two points:

- There's not enough separation between the bass and kick

- There's not enough separation between the bass region and mid-frequencies

Let's deal with both issues right quick!

Bass and Kick Separation

We've previously covered in-depth how to deal with the separation of bass and kick. You can study that later for your advanced practice, but in the meantime let's cover the basic concepts.

You need to make a choice either before tuning and selecting your kick and bass or after the selection has been made.

One of them needs to own the lowest part of the bass register and one of them will own the higher part of the bass region. Neither is better than the other, but what is appropriate will be determined by the genre for the most part.

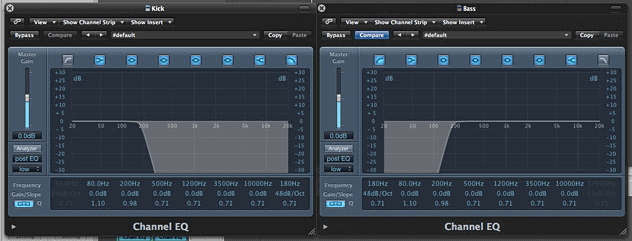

EQ Roll-Offs

Your weapons here are equalization and side-chaining compression for ducking. For EQing, you'll use some roll-offs to create separation.

For instance, if the bass guitar is the lowest frequency instrument and the kick drum is the higher, then you might roll off some of the lowest frequencies on the kick and roll off the higher region of the bass even more lightly since it has more sustain and isn't so transient.

EQ Notching (Boost & Cuts)

Then you'll identify the narrow frequency ranges on each instrument that give them their unique sonic footprint. Say that on the bass, 80 Hz is giving the bass its character. You might give it a slight EQ boost (or none at all) at 80 Hz with a narrow Q.

Then you'd go to the kick drum and give it a slight EQ cut at 80 Hz. You'd do the opposite process to carve out a space for the kick as well. This allows the two instruments to play at the same time and maintain their clarity and character.
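To put rough numbers on the boost-and-cut idea, here's a minimal Python sketch of two mirrored peaking EQ bands at 80 Hz. It assumes the widely used Audio EQ Cookbook biquad formulas. The function names, the 2 dB amount, the Q of 4, and the 44.1 kHz sample rate are illustrative choices, not settings from any particular plugin.

```python
import cmath
import math

def peaking_eq_coeffs(center_hz, gain_db, q, sample_rate=44100):
    """Biquad coefficients for one peaking EQ band (Audio EQ Cookbook form)."""
    amp = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * center_hz / sample_rate
    alpha = math.sin(w0) / (2 * q)
    b = (1 + alpha * amp, -2 * math.cos(w0), 1 - alpha * amp)
    a = (1 + alpha / amp, -2 * math.cos(w0), 1 - alpha / amp)
    return b, a

def gain_db_at(coeffs, freq_hz, sample_rate=44100):
    """Gain of the band, in dB, at a single frequency."""
    b, a = coeffs
    z = cmath.exp(-1j * 2 * math.pi * freq_hz / sample_rate)
    h = (b[0] + b[1] * z + b[2] * z * z) / (a[0] + a[1] * z + a[2] * z * z)
    return 20 * math.log10(abs(h))

# Hypothetical settings: small boost on the bass, matching cut on the kick.
bass_band = peaking_eq_coeffs(80, +2.0, q=4)
kick_band = peaking_eq_coeffs(80, -2.0, q=4)

for freq in (40, 80, 160, 1000):
    print(freq, round(gain_db_at(bass_band, freq), 2), round(gain_db_at(kick_band, freq), 2))
```

The higher the Q, the narrower the band, so frequencies away from 80 Hz are left almost untouched on both instruments.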

Compression Side-Chaining for Ducking

The final technique that's not always necessary is to apply a compressor to the bass and side-chain it to the kick drum. This makes it so that the compressor only triggers when the kick drum plays. So then you'd set the compressor up to slightly reduce the volume of the bass only when the kick drum hits.

This means that the bass is ducking out of the way of the kick momentarily. You don't want to go too hard here because you'll create a sensation of "pumping" out of the bass that will be noticeable.

If you use this technique, your goal is to have it not noticeable to anyone who doesn't realize it's happening. Only you should know.
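If it helps to see the ducking idea as arithmetic, here's a rough Python sketch of the gain a side-chained compressor might apply to the bass. The threshold, ratio, and function name are made-up values for illustration, and a real compressor also has attack and release times that this sketch ignores.

```python
def duck_gain_db(kick_level_db, threshold_db=-18.0, ratio=2.0):
    """Gain change (in dB, zero or negative) applied to the bass for a given kick level."""
    over = kick_level_db - threshold_db
    if over <= 0:
        return 0.0  # kick is quiet or silent: leave the bass alone
    return -over * (1 - 1 / ratio)

# Hypothetical kick levels over time: silence, a hit, then the tail fading out.
for kick_db in (-60.0, -12.0, -16.0, -30.0):
    print(kick_db, duck_gain_db(kick_db))
```

A gentle ratio and a threshold that only the kick's peak crosses keep the reduction to a few dB, which is what keeps the pumping from becoming obvious.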

Low-End and Mid-Range Separation

Amateur "dummy mixes" suffer from a lack of separation between the entire bass region and the mid-range of the song.

Even if some get lucky with choosing the right one-shots or samples and achieve separation of kick and bass, the entire bass region still bleeds over into the mid-range and the whole song ends up sounding muddy and unclear. Let's fix that.

We're going to use roll-offs again. All of your higher frequency instruments can be rolled off around 250 Hz or you can even go aggressive and use a high-pass filter. Then you can take your bass instruments and cut off all of their harmonics at 500 Hz or higher.

Unless you're applying distortion to the bass purposefully, there's no need for the bass or kick to reach any higher than 500 Hz in general, give or take. What you've just achieved is a complete separation of the bass region and the upper region while minimizing the bleed-over of either into the mid-range.
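For the curious, here's a small Python sketch of what a roll-off does to a signal. It uses a basic first-order high-pass filter, which is gentler than the filters in most EQ plugins, so treat it as an assumption-laden illustration and not a model of your DAW. The 250 Hz cutoff comes from the text above. The test tones and function names are made up.

```python
import math

def high_pass(samples, cutoff_hz, sample_rate=44100):
    """First-order high-pass filter (roughly 6 dB per octave below the cutoff)."""
    rc = 1.0 / (2 * math.pi * cutoff_hz)
    dt = 1.0 / sample_rate
    alpha = rc / (rc + dt)
    out = []
    prev_in = 0.0
    prev_out = 0.0
    for x in samples:
        prev_out = alpha * (prev_out + x - prev_in)
        prev_in = x
        out.append(prev_out)
    return out

def rms(samples):
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def sine(freq_hz, seconds=0.5, sample_rate=44100):
    n = int(seconds * sample_rate)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]

def kept_fraction(freq_hz, cutoff_hz=250):
    """How much of a test tone's level survives the roll-off (1.0 = untouched)."""
    tone = sine(freq_hz)
    return rms(high_pass(tone, cutoff_hz)) / rms(tone)

for freq in (60, 250, 2000):
    print(freq, round(kept_fraction(freq), 2))
```

The low tone loses most of its level while the 2 kHz tone passes nearly intact, which is the separation the roll-off is meant to buy you.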

Now you've got separation but you're still lacking some clarity. The mix sounds muddy and boxy... too warm. This will happen regardless but it especially happens in amateur recordings in small rooms with no acoustic treatment. So what we're going to do is use a wide Q and start cutting on the EQ on these mid-range instruments.

Sweep from 200 Hz up to about 550 Hz on each and you'll find the sweet spot where each becomes clearer. Don't be too aggressive on each individually. Always un-solo the track and compare against the full mix. A slight cut on each instrument will add up in the full mix.

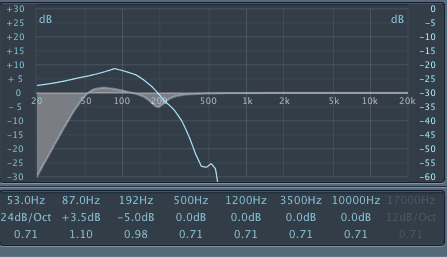

Here's an example of muddiness found at 200 Hz:

At this point, the one thing your mixes will have going for them is a crystal clear bass section that doesn't have to be cranked up to volume 11 to be heard properly.

2. Ear-Piercing Treble Imbalance

The second biggest problem with dummy mixes is the high frequency region. After cranking up the bass volume to hear more clarity there, you have to turn up the treble to get back that crystalline shimmer again.

Before you know it, your song has zero mid-range and is a complete mess. To make matters worse, amateurs learn about crap like aural exciters and have no clue how to deal with sibilance.

The problem here usually lies with the vocals, the hi-hats, and the occasional cymbal crash. Each might have a harmonic or a nuance that creates a very bright peak at specific frequencies.

This will pierce into the human brain and transform it into The Hulk. Nothing will make a song more completely and absolutely unlistenable than this problem. The solution, besides your normal EQ practices, is using a de-esser.

A de-esser is a compressor that targets specific frequencies. For instance, sometimes your vocalist will create a screeching "S" sound that's very pronounced once recorded. With the de-esser, you'll sweep and find that frequency and target it with the compressor functionality.

Now, any time that frequency pops above the threshold it will be reduced in volume to a listenable level. You can do the same with any high-frequency instruments.

Also, amateurs aren't always testing their mixes in different environments, which wouldn't be necessary in a properly treated listening room, but very few have access to that. A mixer might put a shelf boost across the high-end because the mix doesn't feature the sheen and shine they are used to. Except it does.

They just can't hear it in their room on those crappy computer speakers doubling as monitors. So they boost that region and anyone listening to their song in a balanced environment will now hear the high-end at twice the volume, rendering it unlistenable.

This type of compensation can be solved by cross-monitoring on more than one set of monitors, by mixing with both monitors and headphones, and by bouncing MP3s to check in the car and the living room, etc.

The most basic issue may be that they haven't set up their monitors in the right way to create a listening position ripe for mixing.

At this point, you have a clear bass region, non-muddy middle, and crystal clear high-end. What else can be wrong? Lots, unfortunately.

3. Over Compressed With No Dynamics

Songs need to breathe to create a push and pull sensation for the listener. Brains and ears need a break to chew through what's being thrown at them.

While a lot of this should be done in the arrangement and orchestration, mixers will destroy any remaining remnant of dynamics in the mix. Almost every mix you hear in pop music these days suffers from this problem and they trained listeners to like it, sadly.

Beginner mixers will be tempted to compress the ever-living life out of every instrument. The illusion is that this brings the clarity they couldn't achieve in the equalization stage (which should come first).

It's just like turning up the bass to hear the clarity. What happens if you compress everything separately and then end up trying to do some at-home mastering and compress everything even more?

You end up with a dead, lifeless shell of what was once a decent song. There's no solution to offer here other than to tell you to stop compressing so much.

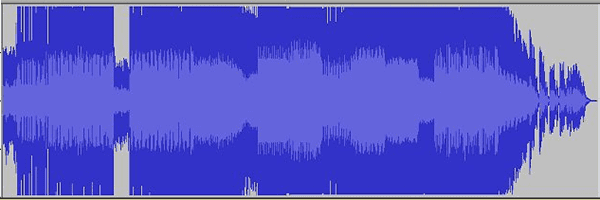

Another reason this happens, in addition to emulating pop music, is that amateurs tend to mix with their eyes instead of their ears and mimic mastered waveforms even though their own song isn't mixed yet, let alone mastered.

Mix with your ears and whenever you reach the level you think is good enough on compression, back off just a tad. Beginners over-do everything.

Until you're really good at mixing, a good rule of thumb is to get where you like it and then back off some... and don't add a mastering plugin on the master output.

4. Too Much or Too Little Panning

Your mix is shaping up very nicely. It's clean and clear and breathes and lives. But it's scattered across the soundscape in all kinds of crazy ways because you aren't quite sure what to do with panning.

Or you leave panning alone because you'd rather play it safe and you end up with a mono mix in a stereo world. You want to pan and you're far better off with wrong panning than no panning.

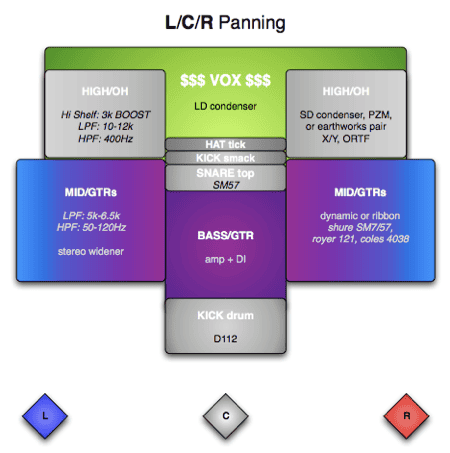

You've got two types of panning in the mixing world and picking a method largely depends on how thick the orchestration is in each particular song. You've got the "spread it evenly" crowd and you've got the "left-center-right" crowd. I'm finding myself slowly switching over to the LCR crowd.

Keep Bass, Kick, and Leads Centered

For both approaches, there are certain instruments you don't want to pan. You want them anchored in the center. The first is your bass and kick because they require a lot of power to be heard. If you pan those all crazy, you're running the risk of busting your listener's speakers.

And it's just confusing because the ability of the human ear to locate bass sounds spatially is not very developed. You also want to keep your lead vocals, guitar solos, and anything else that is the focus of attention right up the middle.

Double takes, emphasis takes, harmonies, etc... those can be panned around. But keep the lead takes in the center.

Spread With Symmetry

If you push one instrument left, push another similar instrument to the right. If one is louder or heavier, then you can pan the other one less or more to balance out the "weight" of the first. It's an art, not a science, so feel it out. There are no hard rules for this.

Here's an example. You'll typically want your snare centered or barely off to the left or right to make room for the vocals. But you might want as wide of a snare as possible, so you double it, pitch shift one by a few cents to avoid phase problems, and pan one hard left and the other hard right.

You've maintained symmetry, instead of panning one 100% to the left and the other 33% to the right. That would sound crazy for the most part.
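To see what a pan knob is doing under the hood, here's a hedged Python sketch of a constant-power pan law. DAWs differ in the pan law they use, so the formula and the function name here are assumptions for illustration only.

```python
import math

def pan_gains(pan):
    """Constant-power pan: pan = -1.0 hard left, 0.0 center, +1.0 hard right.

    Returns (left_gain, right_gain).
    """
    angle = (pan + 1.0) * math.pi / 4
    return math.cos(angle), math.sin(angle)

# A doubled snare panned symmetrically, plus a centered lead for comparison.
for name, pan in (("snare copy A", -1.0), ("snare copy B", 1.0), ("lead vocal", 0.0)):
    left, right = pan_gains(pan)
    print(name, round(left, 3), round(right, 3))
```

Mirrored pan positions produce mirrored gains, which is why a symmetric spread keeps the overall "weight" of the mix balanced between the two speakers.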

Left-Center-Right Mixing

The "spread it evenly" crowd does all we've mentioned. But there's a school of mixing that largely deals only with hard left, perfect center, and hard right when panning.

There are only two exceptions to the rule: percussion and reverb. The bass, kick, snare, and vocals are right up the middle. The lead guitar might be hard left and the rhythm guitar is hard right.

Meanwhile, the toms and hi-hats are trickled throughout the empty space and the reverb is allowed to fly through that inner space. This creates a huge spaciousness and provides the most width possible.

This isn't how you always want to use reverb, but for LCR mixing with sparse arrangements, it's amazing. See the image below for more clarification on this concept.

This is a great way to get around some equalization problems you just can't fix due to the source material.

5. Rare Audio Mixing Scenario: Phase Issues

Most amateurs simply don't realize phase cancellation exists. They only encounter it after getting a good hold on panning.

Sometimes the issue is in the source material because the tracking engineer goofed up on mic placement, but most of the time it's because the beginner mixer created it by doubling tracks and panning or just not catching it from various instruments.

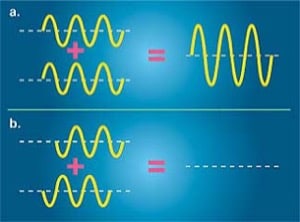

To fully understand this requires some knowledge of wave physics. The basic idea can be understood by everyone though because we've all been in the tub.

Sometimes two waves in the tub or swimming pool collide with each other and cancel each other out (with a splash of left-over water/frequencies bursting forth).

At other times, a faster wave will catch up to a slower wave moving in the same direction and they join forces to make a double strength wave. Suddenly that wave just got a lot bigger.

That's called constructive and destructive interference. Two waves either help each other or hurt each other, with the fullest extent being doubling or fully cancelling each other out.

The fix to this is to listen for it. You'll hear weird spikes or dips in the frequency range in instruments recorded in stereo.

Listen to your stereo tracks in solo mode and drop them to mono on the master track. If you hear a boost or cut in the frequencies anywhere, that's a phase problem.
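The mono check can also be sketched numerically. This minimal Python example sums a left and right sine wave to mono and measures the result for a few phase offsets. The 100 Hz tone, the offsets, and the function name are arbitrary choices for illustration, and real recordings are far messier than a single sine wave.

```python
import math

def mono_sum_rms(phase_offset_deg, freq_hz=100.0, sample_rate=44100, seconds=0.1):
    """RMS level of (left + right) / 2 when right lags or leads left by a phase offset."""
    n = int(sample_rate * seconds)
    offset = math.radians(phase_offset_deg)
    total = 0.0
    for i in range(n):
        t = i / sample_rate
        left = math.sin(2 * math.pi * freq_hz * t)
        right = math.sin(2 * math.pi * freq_hz * t + offset)
        mono = 0.5 * (left + right)
        total += mono * mono
    return math.sqrt(total / n)

for degrees in (0, 90, 180):
    print(degrees, round(mono_sum_rms(degrees), 3))
```

With no offset the mono sum keeps its full level, and as the offset approaches 180 degrees the tone all but disappears. That drop is what you're listening for when you fold a stereo track down to mono.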

The fix is to change the wave pattern of either the left track or right track at the problematic frequency region. It's not always fixable. The best way to fix it is to avoid it altogether in the recording stage.

But if you find phase problems in the mix, you can try one of two approaches:

- pitch shifting one side of the recording by a matter of cents.

- moving one side forward or backwards in time by a few milliseconds.

These changes will be noticeable and can even be a feature of the mix, but they will be less noticeable than a phase issue.
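To get a feel for how small these nudges are, here's a short Python sketch that converts cents and milliseconds into numbers you can reason about. The helper names and sample rates are assumptions. The last function uses the standard comb-filter relationship: summing a signal with a delayed copy of itself first cancels at the frequency whose half-period equals the delay.

```python
def cents_to_ratio(cents):
    """Frequency ratio for a pitch shift in cents (100 cents = one semitone)."""
    return 2 ** (cents / 1200)

def ms_to_samples(ms, sample_rate=44100):
    """Nearest whole number of samples for a time shift in milliseconds."""
    return round(ms * sample_rate / 1000)

def first_null_hz(delay_ms):
    """Lowest frequency that cancels when a signal is summed with a copy delayed by delay_ms."""
    return 1000 / (2 * delay_ms)

print(cents_to_ratio(5))      # a 5 cent shift barely changes the frequency
print(ms_to_samples(2))       # a 2 ms nudge at 44.1 kHz
print(first_null_hz(1.0))     # where a 1 ms offset starts cancelling
```

Shifting one side in time moves the cancellation to a different frequency, so the goal is to push it somewhere less damaging, not to make it vanish.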

6. Basic Audio Mixing Mistake: Vocal Volumes

Don't feel bad about this one. Even the pros suffer from not finding the right placement for the volume of the vocals. The problem arises from familiarity: you know the vocals so well that you lose perspective.

Once you know the words of a song, you can make them out with much more ease than a first time listener. What happens is you end up having them at a lower volume than needed.

The solution is simple. Bounce three separate versions of the song and label them Vocal Up, Vocal Down, and Vocal Middle. The "Middle" version is the one you think is just right. The others feature the vocals up (my suggestion is by 2 dB) and the vocals down in volume.
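If you want to know what 2 dB means as a fader multiplier, here's a tiny Python sketch. The decibel-to-gain formula is standard. Taking the "Down" version as a matching 2 dB cut is my assumption, since only the "Up" amount is suggested above.

```python
def db_to_gain(db):
    """Convert a level change in dB to a linear amplitude multiplier."""
    return 10 ** (db / 20)

# Assumed offsets for the three bounces (the -2.0 is an illustrative mirror of the +2.0).
VOCAL_OFFSETS_DB = {"Vocal Up": 2.0, "Vocal Middle": 0.0, "Vocal Down": -2.0}

def vocal_gains(offsets_db=VOCAL_OFFSETS_DB):
    return {name: db_to_gain(db) for name, db in offsets_db.items()}

for name, gain in vocal_gains().items():
    print(name, round(gain, 3))
```

A 2 dB move works out to roughly a 26% change in amplitude, which is small enough to be subtle but large enough for fresh ears to pick a favorite.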

Then you just ask several people what they think, preferably a professional and then some non-professionals who will go off of intuition and experience rather than analysis.

7. Audio Mixing Blunder: Misaligned Tracks

I hate this with a passion. It happens to the best of us. Sometimes misaligned tracks sneak into the final mix and this happens for a variety of reasons:

- the software glitched and shifted one track by 300ms or so, for example.

- the mixer couldn't snap to grid and had to place tracks (like the chorus) by ear and eye

- the recording collaborators didn't send stems to each other and had to place tracks by ear or eye

You've heard this undoubtedly on some of your favorite records. The worst I've ever heard is the entire vocal track (all three verses and choruses) being off by as much as half of a second.

This happens when the mixer studies the projects open in the DAW, thinks they are good, and bounces them and never listens again.

If the computer screws up, you'll never know. You have to listen to the bounced files and listen very carefully!

The other issue is that the mixer receives files that can't be synced up to the grid in the DAW to an established tempo for whatever reason. So when they have to copy and paste regions, perhaps the chorus vocals for example, they might misplace them by 200ms.

They don't notice it, but the fans that hear the song over and over will definitely notice. All you can do here is be very careful and get approval from the original artist. They will be the first to notice the issue.

When collaborators send files across the internet, the temptation is to bounce a rough mix and send that off so someone else can add guitar or vocals.

Say your job is to play a synth solo over the bridge, so you record it and then send the final take back to the original artist. But you only leave about 5 seconds of silence in front of the synth.

This forces the original artist to have to try to place your synth with the perfect timing. The solution here is to send stems. Stems start at the beginning of the song, even if that means you'll have 3 minutes of silence before the synth solo begins. No more placement issues and misaligned tracks!
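Most DAWs will export stems for you, but the idea is simple enough to sketch in Python. This hypothetical helper pads a recorded take with silence so it runs from the first sample of the song to the last. The function name and the plain list-of-samples representation are assumptions for illustration.

```python
def make_stem(take, start_seconds, song_seconds, sample_rate=44100):
    """Pad a recorded take with silence so it spans the whole song."""
    start = round(start_seconds * sample_rate)
    total = round(song_seconds * sample_rate)
    if start + len(take) > total:
        raise ValueError("take runs past the end of the song")
    tail = total - start - len(take)
    return [0.0] * start + list(take) + [0.0] * tail

# Toy example at a tiny sample rate so the result is easy to read.
synth_solo = [0.5, 0.5]
print(make_stem(synth_solo, start_seconds=1.0, song_seconds=2.0, sample_rate=4))
```

Because every stem starts at zero, the receiving artist only has to drop each file at the start of the session and everything lines up.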

8. No Familiarity With Your Listening Gear

Not having an acoustically treated room is a bummer. But it's a hump you can get over and still pump out perfect mixes.

The problem is that if you don't know the characteristics of your room, your monitors, and your best studio headphones, you'll over- and under-compensate all over the place to make up for their problems. And this always has double the effect on the regular listener in their own listening environment.

You always want to create the most neutral mix possible, because you can't predict how and where your listeners will be when they turn on your music.

Your first goal is to realize... your room is small, which means you're going to hear extra bass. There's also no treatment so you're going to hear all kinds of reflections coming off the walls, creating phase issues that may not really be there in the recording.

You've got to learn the nuances of your room so you aren't destroying your mix by fixing problems that don't exist in the mix.

The same goes for your headphones and your monitors. One might have more of a bass response than the other. Same goes for the high-end or any other frequency range.

By creating a mix and studying the result in the car, in small ear buds, in your best studio monitors and headphones, and in other rooms, you'll start to figure out what problems are arising from your mixing gear and environment. Then you can compensate for them properly.

Audio Mixing for Dummies - Conclusion

If you're dealing with these issues, then let me give you bad news first. You're a newbie. The good news is that these problems are extremely easy to overcome thanks to this article, Audio Mixing for Dummies. Read it again if you need to.

Read the other links we provided. And most importantly, experiment and practice. In no more than a week's time you'll graduate to intermediate and your fans are going to be like "holy cow, bro."

You might even get gung-ho and go back and fix some of your old mixes of the fan-favorite songs. The options are endless once you know what you're doing!

One thing is for sure... You're capable! It just takes a little knowledge. That's what LedgerNote is all about: sharing. Until next time!